Presenting in a conference room overlooking the Chicago skyline, the team was upbeat and full of energy. The regional CIO for the Americas was in attendance, and the centerpiece of their presentation charted the rise of customer satisfaction (CSAT) scores for their IT Support services to record levels. Their opportunities to interact with this CIO had been limited since he’d taken office a year earlier, but they knew he considered a world-class customer experience the most important measure of IT Support. They had this.

As the critical slide was delivered, the CIO sat back and crossed his arms.

“I don’t believe you.”

Shocked silence held the room. It wasn’t a matter of belief, they thought; it was data!

This IT leader, however, wasn’t questioning what the report said. Instead, despite every green SLA they could show him, he knew his customers weren’t truly happy.

The Watermelon Effect

The scene described above is hardly a unique one. IT executives across the globe encounter the dreaded “watermelon effect”, sometimes without even knowing it. It’s considered a tell-tale sign of an adolescent IT Support organization that has taken the first steps toward maturity.

How It Happens

- You realize you need to quantify employee satisfaction with IT services

- You develop and implement a method to gather the relevant data

- You create the reporting necessary to turn the data into consumable information

- You use that reporting to set and measure progress against a goal: a KPI or an SLA

- Your management team then sets out to achieve the KPIs and turn them green. Mission accomplished!

Yet, like the CIO in our story, you come to realize that your dashboard is green only on the outside. Just like a watermelon, underneath the surface of your reports your customers are red with anger. You’ve got a problem to solve and, like every good leader, you know the first step to solving a problem is understanding it.

Step One: Quantify the Red

The watermelon effect most often occurs when KPIs and SLAs are misapplied. It is vital to identify the correct or desired behavior before you start applying metrics. Many IT Support teams mistakenly adopt standard performance metrics and guidelines without first going through the proper process, which requires identifying Critical Success Factors (CSFs) and Key Performance Indicators (KPIs) before defining Service Level Agreements (SLAs).

Victims of the watermelon effect frequently overemphasize time-based and/or operational metrics without considering more business-oriented variables. To avoid similar mistakes, we recommend beginning with a much more exploratory, user-focused approach:

Tactics:

To measure the right things, you must first identify which things matter most to key stakeholders and, more specifically, which of those things most frequently leave them dissatisfied. Feedback and critiques may vary greatly, touching on processes, tools, people, or technologies. When a stakeholder struggles to pinpoint their primary source of dissatisfaction, it’s often helpful to review tickets they recently submitted to identify the specific incidents that were handled unsatisfactorily. Approaches to gathering important performance feedback include:

- Interviews

- Post-ticket survey results

- Periodic survey results/baselines

- Custom survey for dissatisfied stakeholders and their team(s)

- Focus groups
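One way to quantify the red is to break aggregate satisfaction scores down by stakeholder group, because a healthy overall average can hide a pocket of deeply dissatisfied users. The sketch below is a minimal illustration under stated assumptions: post-ticket survey responses are exported as (team, score) pairs on a 1-to-5 scale, a score of 4 or 5 counts as "satisfied", and the team names, scores, and 75% target are all hypothetical.

```python
from collections import defaultdict

# Hypothetical post-ticket survey export: (team, score on a 1-to-5 scale).
responses = [
    ("Finance", 5), ("Finance", 4), ("Finance", 5), ("Finance", 5),
    ("HR", 5), ("HR", 4), ("HR", 5),
    ("Development", 2), ("Development", 3), ("Development", 2),
    ("Sales", 5), ("Sales", 5), ("Sales", 4), ("Sales", 5),
]

SATISFIED_MIN = 4  # assumption: scores of 4 or 5 count as "satisfied"
TARGET = 0.75      # assumption: hypothetical CSAT target


def csat(scores):
    """Share of responses at or above SATISFIED_MIN."""
    return sum(s >= SATISFIED_MIN for s in scores) / len(scores)


def csat_by_team(rows):
    """Group scores by team and compute CSAT for each group."""
    groups = defaultdict(list)
    for team, score in rows:
        groups[team].append(score)
    return {team: csat(scores) for team, scores in groups.items()}


def status(value):
    """Label a CSAT value green or red against the target."""
    return "GREEN" if value >= TARGET else "RED"


overall = csat([score for _, score in responses])
print(f"Overall CSAT: {overall:.0%} ({status(overall)})")
for team, value in sorted(csat_by_team(responses).items()):
    print(f"{team:12} {value:.0%} ({status(value)})")
```

With this sample data the overall score clears the target while the development team sits at zero, which is the watermelon effect in miniature: the aggregate is green, and one segment is entirely red.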

Step Two: Solve for Green

Once you’ve identified what matters and, as a subset, what needs fixing, you can begin putting the proper metrics in place. Drawing on input from both satisfied and dissatisfied stakeholders, proceed with the following process:

- Describe and document the correct behavior

- Establish CSFs

- Establish KPIs

- Establish SLAs

- Review all old time-based metrics and look for exceptions and escalation factors that have not been considered.

- Ensure that business metrics are considered, not just IT operational metrics. Make sure you understand stakeholder goals and that you’re measuring the key factors that drive those outcomes.

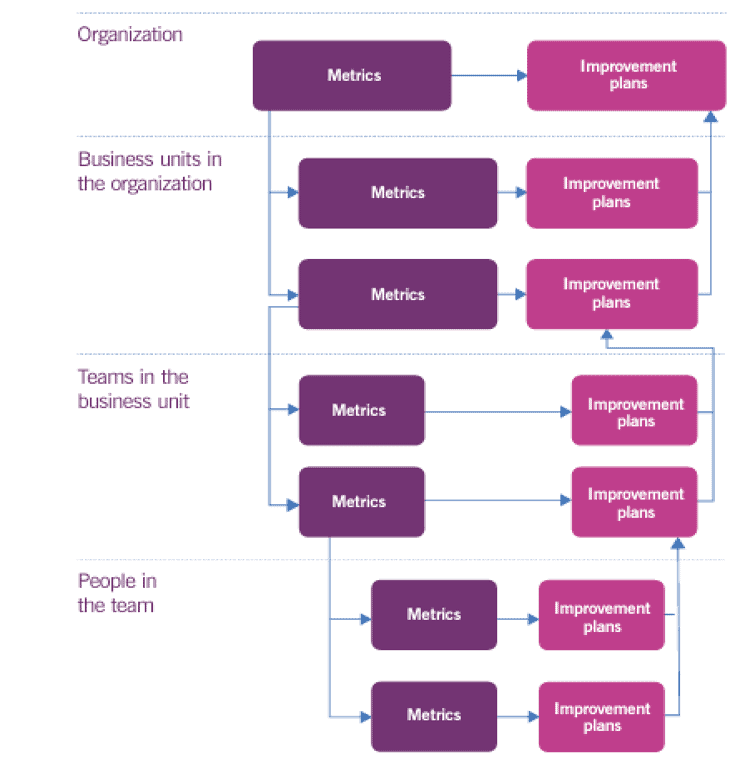

This deliberately hierarchical approach, with its emphasis on analyzing and reporting at multiple levels, creates opportunities to identify areas for improvement and added value across the entire organization. The diagram shows how proper measurement can drive improvement plans at each level, cascading all the way up to high-level improvement planning. Again, none of this is possible without first understanding stakeholder goals and the metrics most relevant to achieving them.

Watermelon Effect, Example 1:

Despite all metrics being green, the CIO at EA was dissatisfied. Once his complaints were voiced, it became clear that the dissatisfaction was confined to his development teams and arose only when they were testing new games for release, specifically during the alpha and beta phases.

After numerous in-depth discussions with the managers of these teams, and a brief survey of the developers themselves, one thing was obvious: the standard time-based SLAs then in place (most notably response and resolution time) were not sufficient for the alpha and beta phases of the development cycle. Those phases required faster response and resolution times because of approaching launch dates and the resulting pressure to get fixes done.

In this case, the fix was fairly simple:

- Development team managers were to inform service managers when their teams entered alpha testing and also provide the launch date for the game.

- The service manager would then add a new rule to the ITSM system that raised the priority of any ticket submitted by that team during that period.

- Agents/Technicians would know immediately that the team was in the testing stage and act accordingly to expedite and, if necessary, escalate those tickets to L2/3 faster.
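The ITSM rule described above can be sketched as a simple priority override. This is a minimal illustration, not the rule syntax of any particular ITSM product: the `TestingWindow` and `Ticket` records, the team names, the dates, the 1-to-4 priority scale, and the one-level priority bump are all hypothetical assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TestingWindow:
    """Hypothetical record created when a team reports it has entered alpha testing."""
    team: str
    start: date   # date the team entered alpha testing
    launch: date  # launch date supplied by the development team manager


@dataclass
class Ticket:
    team: str
    opened: date
    priority: int  # assumption: 1 = highest, 4 = lowest


def apply_testing_rule(ticket, windows):
    """Raise priority by one level if the ticket's team is between alpha start and launch."""
    for w in windows:
        if w.team == ticket.team and w.start <= ticket.opened <= w.launch:
            ticket.priority = max(1, ticket.priority - 1)  # never go above P1
            break
    return ticket


windows = [TestingWindow("Studio A", date(2024, 3, 1), date(2024, 6, 15))]
t1 = apply_testing_rule(Ticket("Studio A", date(2024, 4, 2), 3), windows)
t2 = apply_testing_rule(Ticket("Studio B", date(2024, 4, 2), 3), windows)
print(t1.priority, t2.priority)
```

Bumping a ticket by one level rather than forcing it straight to the top priority keeps genuine outages ahead of testing-phase requests; whether that trade-off fits depends on your own priority matrix.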

In the beginning, team needs weren’t even understood, much less meaningfully tracked, measured and reported. But once the development teams were thoughtfully engaged, improvement was possible, the issues were resolved and user satisfaction soared.

Watermelon Effect, Example 2:

While providing IT Support as a Service for a large, multi-vertical regional hospital (the healthcare provider also owned a growing chain of primary-care clinics across multiple states), we encountered the dreaded “Watermelon Effect”:

All SLAs were green:

- Average Speed of Answer: Check

- Abandon Rate: Check

- Resolution Time: Check

At our annual meeting, however, we learned that the VP of Technology was receiving complaints about delays in opening new healthcare clinics during the organization’s period of tremendous growth, caused in part by late-arriving IT equipment. The operational metrics that had earned us a 98% customer satisfaction rating were not translating into success in this vital aspect of the business.

We recommended a cross-departmental Key Performance Indicator (KPI) based on new clinics opening on schedule with shared responsibility between project management, facilities and IT. The goal was to ensure that at least 98% of new clinics would open on time. We pulled and reviewed all tickets associated with new clinic openings and scheduled appropriate follow-up meetings with facilities and project management.
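The on-time KPI is a simple ratio: clinics opened on or before their scheduled date, divided by all clinics opened in the reporting period, compared against the 98% target. The sketch below assumes each opening is recorded as a (scheduled, actual) date pair; the clinic names and dates are hypothetical.

```python
from datetime import date

TARGET = 0.98  # goal from the KPI: at least 98% of new clinics open on time

# Hypothetical records: clinic -> (scheduled opening date, actual opening date)
openings = {
    "Clinic 01": (date(2024, 1, 8), date(2024, 1, 8)),
    "Clinic 02": (date(2024, 2, 5), date(2024, 2, 12)),  # opened a week late
    "Clinic 03": (date(2024, 3, 4), date(2024, 3, 1)),   # opened early
}


def on_time_rate(records):
    """Fraction of clinics whose actual opening was on or before the scheduled date."""
    on_time = sum(actual <= scheduled for scheduled, actual in records.values())
    return on_time / len(records)


rate = on_time_rate(openings)
status = "GREEN" if rate >= TARGET else "RED"
print(f"On-time openings: {rate:.1%} ({status})")
```

With only a handful of openings per period, a single late clinic is enough to turn this KPI red, so it surfaces exactly the business outcome that the green operational SLAs missed.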

It’s important to emphasize that we approached our new business- and outcome-based KPI with shared responsibility, which demanded greater cooperation and collaboration to fully understand and resolve the problem. Sharing responsibility for an important, measured goal that was reported to the head of IT broke down silos and exposed a wider audience to the full, value-based business picture.

In the end, to accommodate the unprecedented growth the organization was experiencing, IT needed to build a larger stockpile of workstations and other relevant equipment and to prioritize their delivery and setup. Project Management needed to provide us with a list of expected openings, including clinic size, specialized equipment details, and opening dates. And Facilities needed to notify both IT and Project Management immediately when it was ready to begin equipment installations. An “Open New Clinic” workflow was created in ServiceNow that collected all required information from every party, notified each of them of progress, and allowed us to report on the new KPI.

Again, the “Watermelon Effect” taught us the importance of prioritizing business-based metrics in addition to operational ones, and the need to update such metrics as the organization evolves over time.

Step Three: Find the New Red

Ensuring quality customer experience on a sustained basis requires an embrace of continuous improvement. To protect against complacency and continually add value, we recommend the following eight-step process:

- Ongoing meetings with “frequent fliers” and the customers/teams with the highest levels of dissatisfaction

- Thorough analysis of post-ticket survey results

- Thorough analysis of periodic survey results/baseline

- Quick manager outreach to dissatisfied customers, stakeholders and their team(s)

- Understand the goals/metrics of the teams you support and keep that information current, updating it at least annually (it will change!).

- Create and maintain a Continual Improvement Register

- Create, update, and maintain a user knowledge base that collects user feedback

- Analyze user KB feedback, take appropriate remediation actions, and follow up with users to provide feedback

Onshore offers a range of IT Support services including consultation, team extension, fully managed IT Support functions and more. To learn more about us, our model, our mission, and our services, please schedule an appointment with one of our business development executives.